We are now entering the realm of software testing, a topic I have mentioned in many of my previous blog posts, and this time we are going to cover it in full.

Before starting, we are going to define testing as validating that a piece of software meets its business and technical requirements.

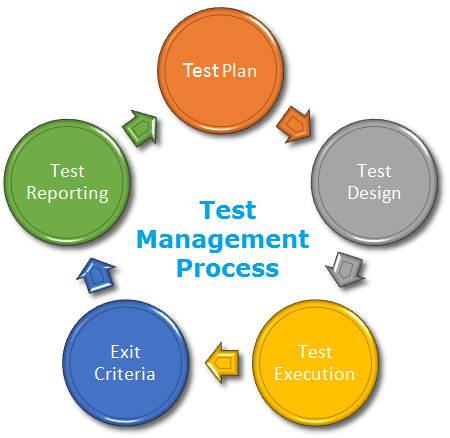

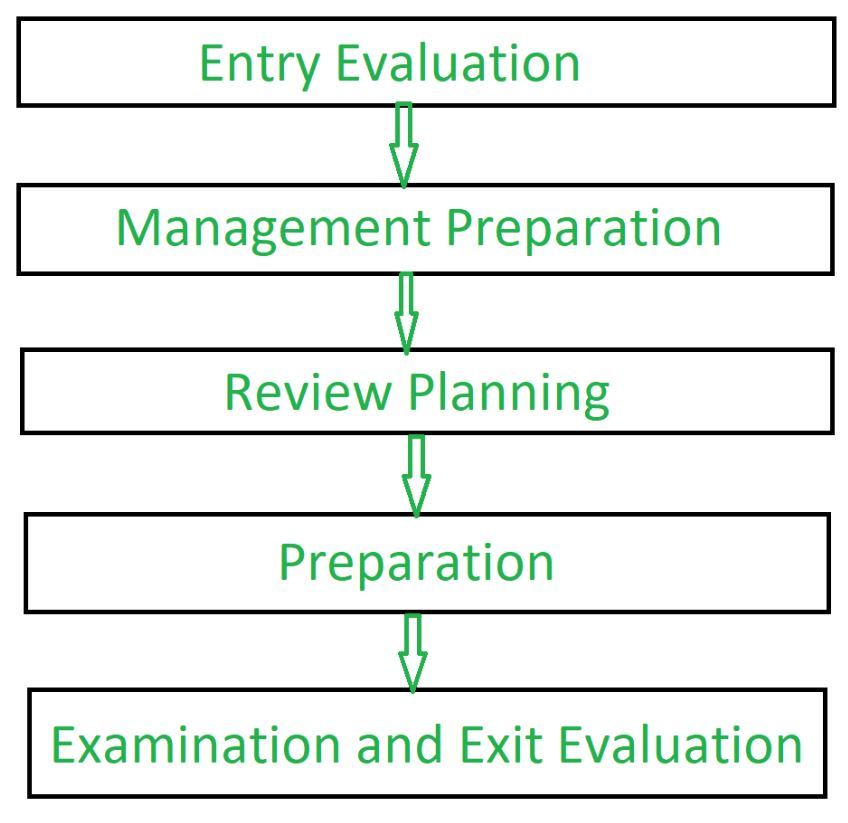

Testing Process

The software testing process depends a lot on the company or individual, because it depends on the workflow being used, but I found this blog post by Url Eriksson that gives a general overview in 4 key elements:

#1 Test Strategy and Test Plan

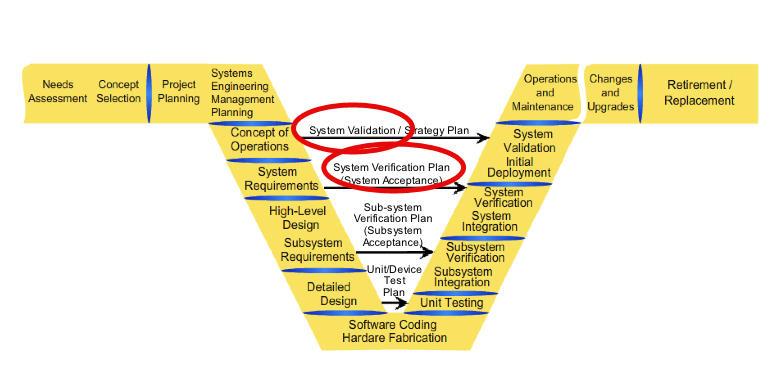

I mentioned the test plan in my Software Verification and Validation blog post. This plan comes at the start of the project, while we are setting up the requirements, and we improve or change it once we have a deeper view of the system during the detailed design. Some of the elements that we need to take into account are the following:

- Features and functions that are the focus of the project

- Non-functional requirements

- Key processes to follow, for defect resolution and defect triage

- Tools for logging defects, for test case scripting, and for traceability

- Documentation to refer to, and to produce as output

- Test environment requirements and setup

- Risks, dependencies and contingencies

#2 Test Design

One important thing that we often neglect while we code is our test cases. This can backfire when we write our unit tests and find there is no proper way to test the functionality. To avoid this situation, we can design and plan our tests ahead of time, or even follow a Test Driven Development approach, where the tests are written before the code.
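To make the test-first idea a bit more concrete, here is a minimal sketch in Python. The `apply_discount` function and its rules are invented for illustration; the point is only that the tests describe the expected behavior and could be written before the function body exists.

```python
# Hypothetical example: the function and its rules are invented for illustration.
def apply_discount(price: float, percent: float) -> float:
    """Return the price after applying a percentage discount."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


# In a test-first workflow, these tests would be written before the function body.
def test_regular_discount():
    assert apply_discount(200.0, 25) == 150.0


def test_no_discount_keeps_price():
    assert apply_discount(80.0, 0) == 80.0


def test_invalid_percent_is_rejected():
    try:
        apply_discount(80.0, 120)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError")
```

The test functions use plain `assert` statements, so they can be called directly or collected by a test runner such as pytest.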

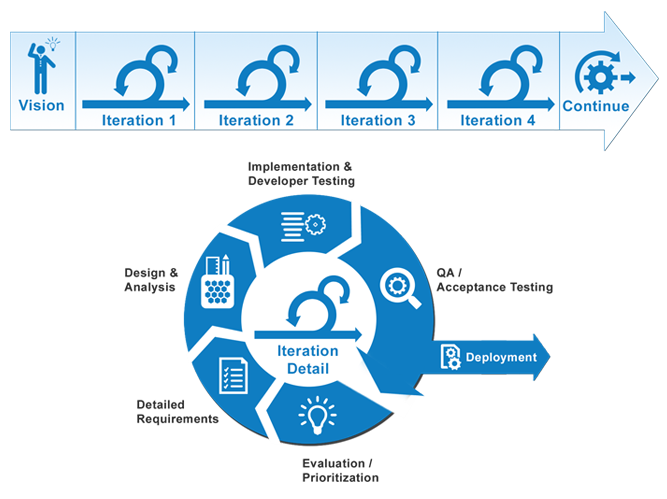

#3 Test Execution

The way the tests are executed depends a lot on the life cycle methodology you follow to develop your code: it can be a single waterfall integration test, multiple tests every Agile sprint, and so on. In the end, your goal is to have enough tests to make sure that the system is relatively bug-free and that changes do not break key components.

One of the key questions during execution is “What environment are we running these tests on?”. A really common example is mobile development: there is a wide range of screens and devices, from low end to high end, and we need to make sure that an application works on all of them. In my experience, when I did web development work at Google, we had tests that made sure our application ran correctly on different browsers.

#4 Test Closure

To finish up our testing, we need to make sure that we have a green light. For this, the company or team establishes metrics; some examples are as follows:

- 100% requirements coverage.

- Minimum percentage of tests passed.

- Critical defects to be fixed.

- Percentage of lines of code covered by test cases.

It all depends on what the company and the stakeholders establish as the requirement. As a rule of thumb, the minimum percentage of tests passed is set above 90%.
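A closure check like this can be automated. The sketch below is only an illustration: the `RunSummary` fields, the `closure_problems` function and the 80% line coverage threshold are assumptions of mine, and the 90% pass rate is just the rule of thumb mentioned above.

```python
from dataclasses import dataclass


@dataclass
class RunSummary:
    """Hypothetical summary of a finished test run."""
    requirements_total: int
    requirements_covered: int
    tests_total: int
    tests_passed: int
    open_critical_defects: int
    line_coverage_percent: float


def closure_problems(summary, min_pass_rate=90.0, min_line_coverage=80.0):
    """Return the unmet exit criteria; an empty list means green light."""
    problems = []
    if summary.requirements_covered < summary.requirements_total:
        problems.append("not all requirements are covered")
    # Guard against an empty run so we never divide by zero.
    if summary.tests_total == 0:
        pass_rate = 0.0
    else:
        pass_rate = 100.0 * summary.tests_passed / summary.tests_total
    if pass_rate < min_pass_rate:
        problems.append(f"pass rate {pass_rate:.1f}% is below {min_pass_rate}%")
    if summary.open_critical_defects > 0:
        problems.append("critical defects are still open")
    if summary.line_coverage_percent < min_line_coverage:
        problems.append("line coverage is below the agreed minimum")
    return problems
```

Returning the list of failed criteria, instead of a single boolean, makes it easier to report why a release did not get the green light.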

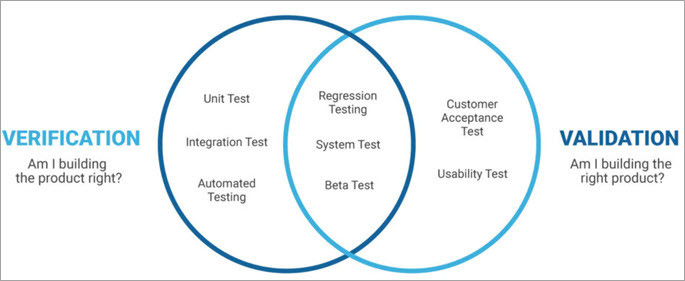

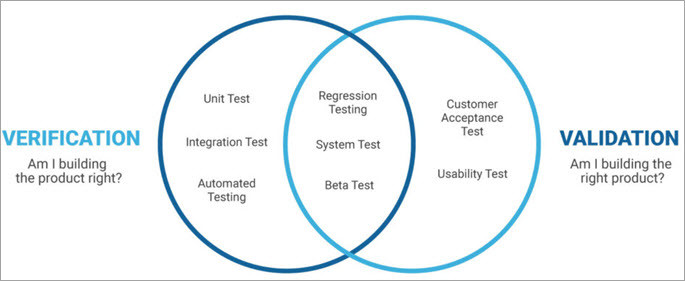

Types and levels of testing

Types

There are many types of tests that we can use to make sure that changes do not break our software. Sten Pittet has a great article where he talks about some of them, and we are going to go through them here:

- Unit Testing: Tests individual functions or methods.

- Integration Testing: Verifies that services or different modules behave correctly together.

- Functional Testing: Verifies that the output of an action is correct, without checking the intermediate states of the system.

- End-to-end Testing: Replicates a user’s behavior in a complete software environment.

- Acceptance Testing: Verifies that the system meets business requirements. Mostly done with end users.

- Performance Testing: Checks the system when it’s under heavy stress to see how it behaves.

- Smoke Testing: Quick tests that check basic functionality. Normally done after you deploy an application in a new environment.
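The difference between the first two types is easier to see in code. In this small Python sketch (all names are hypothetical), the unit test checks one function in isolation, while the integration test checks that two pieces work together.

```python
def parse_quantity(text: str) -> int:
    """Parse a positive quantity typed by the user."""
    value = int(text.strip())
    if value <= 0:
        raise ValueError("quantity must be positive")
    return value


class InMemoryInventory:
    """Hypothetical inventory module kept in memory for the example."""

    def __init__(self, stock):
        self._stock = dict(stock)

    def reserve(self, item, quantity):
        if self._stock.get(item, 0) < quantity:
            return False
        self._stock[item] -= quantity
        return True

    def available(self, item):
        return self._stock.get(item, 0)


def place_order(inventory, item, quantity_text):
    """Combine input parsing and the inventory module."""
    return inventory.reserve(item, parse_quantity(quantity_text))


# Unit test: a single function, no collaborators.
def test_unit_parse_quantity():
    assert parse_quantity(" 3 ") == 3


# Integration test: parsing and inventory behaving correctly together.
def test_integration_order_updates_inventory():
    inventory = InMemoryInventory({"book": 5})
    assert place_order(inventory, "book", "2") is True
    assert inventory.available("book") == 3
    assert place_order(inventory, "book", "10") is False
    assert inventory.available("book") == 3
```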

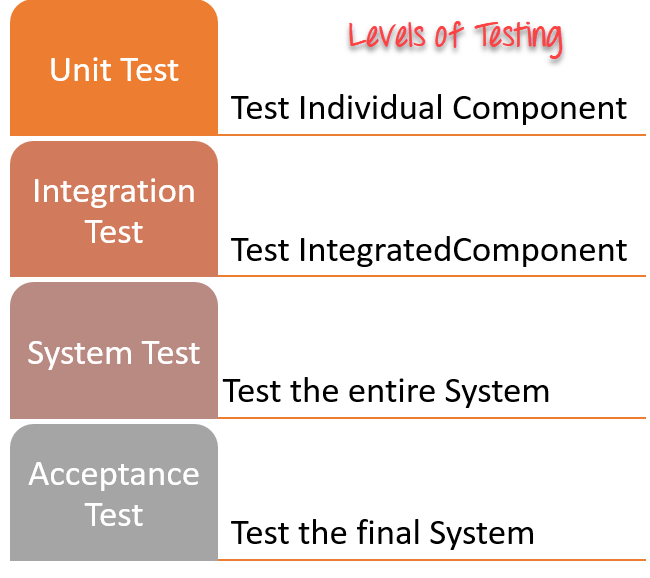

Levels

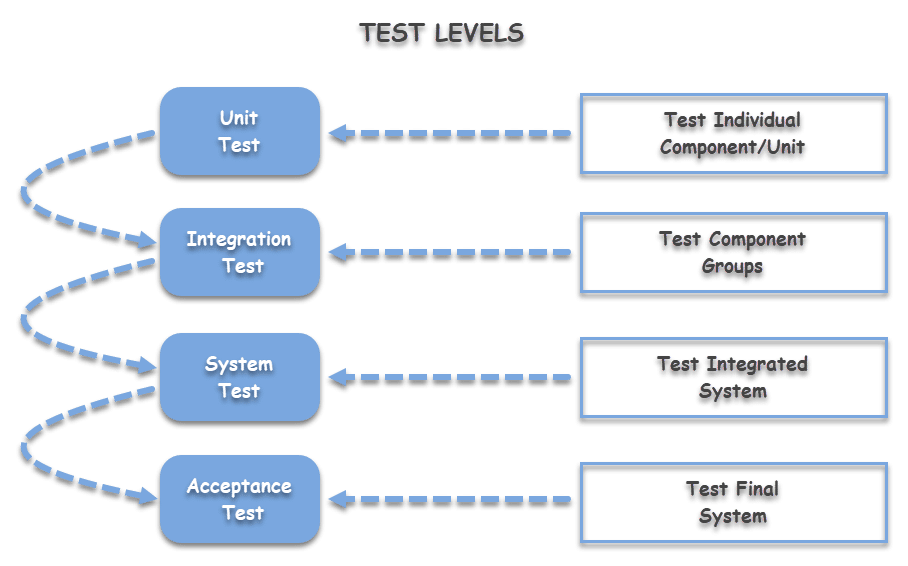

We can find four levels of testing, which ToolsQA describes in this blog post as follows:

- Unit Testing: Checks whether the individual software components fulfill their duty. (Level 1)

- Integration Testing: Checks the data flow between different components of the system. (Level 2)

- System Testing: Evaluates all the functional and non-functional needs of the system. (Level 3)

- Acceptance Testing: Checks that the requirements of the stakeholders are met. (Level 4)

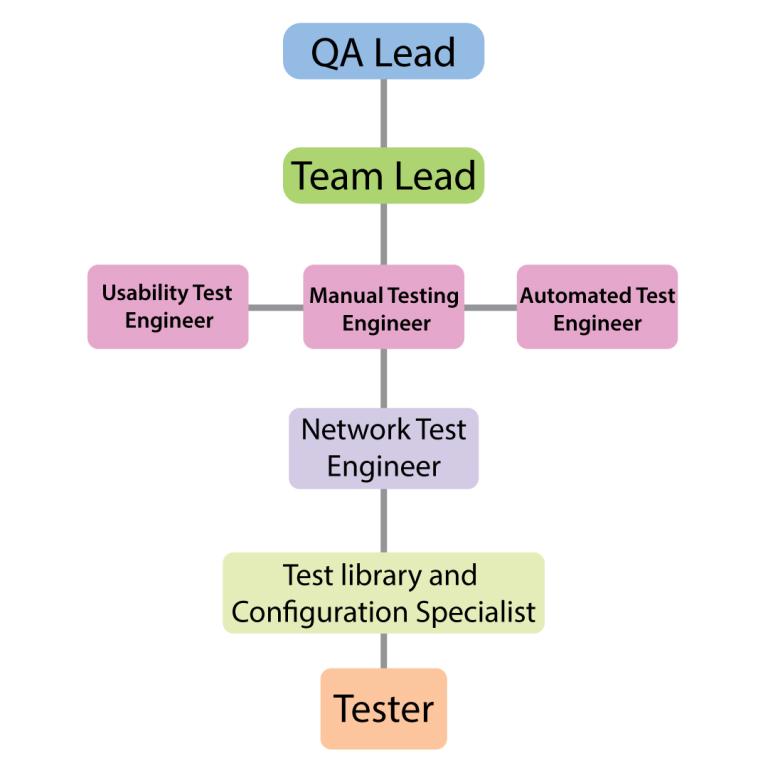

Activities and Roles of Testing

In every software company, there are people in charge of testing. In a blog post by Test Institute, I found 2 of the most important roles that you can find in teams in professional environments.

Test Lead / Manager

As the name suggests, this person is the leader of the testing team. Their key activities are:

- Defining the testing activities for testers.

- All responsibilities of test planning.

- Allocating resources for the testing.

- Preparing the status report of testing activities.

- Interacting with customers and stakeholders.

- Updating project manager regularly about the progress of testing activities.

Test Engineers / QA testers

These report to the test lead. Their key activities are:

- Read all the documents and understand what needs to be tested.

- Based on the information gathered in the step above, decide how it is to be tested.

- Inform the test lead about all the resources that will be required for software testing.

- Develop test cases and prioritize testing activities.

- Execute all the test cases and report defects, defining severity and priority for each defect.

- Carry out regression testing every time changes are made to the code to fix defects.

Something important to keep in mind is that in some companies software developers also work as test engineers when there is no dedicated team for it.

Testing environments

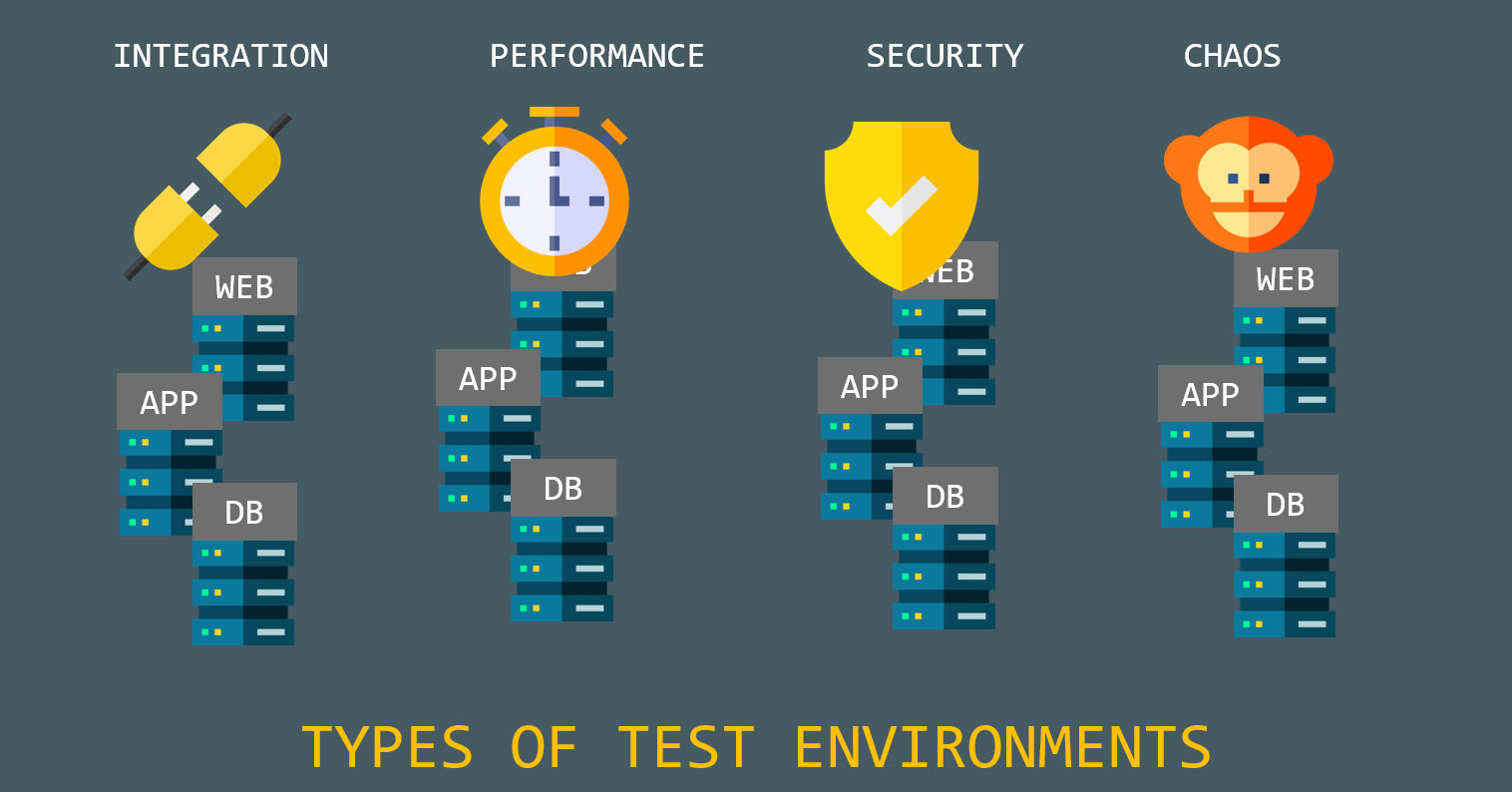

What are testing environments exactly? Well, remember all the types of tests we talked about previously? Now we need to know where to run them. In large production environments the tests are mostly automated, and for that you need an environment where they can run; that is what we are talking about today. There are a lot of different tests that can be done, and the list of test environment types depends a lot on the author, so we are going to focus on the ones in this blog post by Temdotcom.

Integration Testing Environment

We talked about integration tests before. To set them up, we need a complete environment to run them in, since multiple components have to run at the same time and communicate with each other so we can check that everything works correctly.

Normally, a team will have a set of integration tests to run, and it all comes down to having the hardware, software and right configuration to be able to run the tests.

Performance Testing Environment

This environment is used to determine how the system behaves in certain situations, and to verify its response time, stability and other factors that are defined based on the requirements.

These tests are quite variable and sometimes complex to define. Some of the things that need to be set up for these tests are:

- Number of CPUs.

- Number of concurrent users.

- Size of RAM.

- Data volume.
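As a rough illustration of how two of these parameters (concurrent users and data volume) turn into a measurement, here is a small Python sketch. `handle_request` is an invented stand-in for the system under test; the number of CPUs and the size of RAM are properties of the environment itself and are not controlled by the script.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(payload_size: int) -> int:
    """Stand-in for the system under test; the workload is invented."""
    return sum(range(payload_size))


def timed_call(payload_size: int) -> float:
    """Return how long one request takes, in seconds."""
    start = time.perf_counter()
    handle_request(payload_size)
    return time.perf_counter() - start


def run_load_test(concurrent_users: int, requests_per_user: int, payload_size: int):
    """Fire requests from a pool of worker threads and summarize the timings."""
    total = concurrent_users * requests_per_user
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        durations = sorted(pool.map(timed_call, [payload_size] * total))
    return {
        "requests": total,
        "mean_seconds": sum(durations) / total,
        # Approximate 95th percentile taken from the sorted timings.
        "p95_seconds": durations[int(0.95 * (total - 1))],
        "max_seconds": durations[-1],
    }
```

A call such as `run_load_test(concurrent_users=4, requests_per_user=5, payload_size=1000)` returns a dictionary of timings that can be compared against the response times set in the requirements. Dedicated load testing tools do much more than this; the sketch only shows the shape of the measurement.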

Security Testing Environment

When working with software where security is critical, security teams want to make sure that the software does not have potential vulnerabilities or flaws in different areas. Normally these environments are set up by security experts.

First of all, they establish a base case covering common vulnerabilities that can occur in the software’s environment, for example, SQL Injection. Once these tests are done, the security experts focus on more in-depth test cases that require knowledge of how this particular system works.
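A base case for SQL Injection can be as small as the following sketch, which uses Python’s built-in `sqlite3` module with an in-memory database. The table, the two lookup functions and the payload are made up for the example; the unsafe function is there only to show what the test is protecting against.

```python
import sqlite3


def make_database():
    """Create a throwaway in-memory database with two users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: user input is concatenated into the SQL text.
    query = "SELECT name FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized query: the input is passed as data, never as SQL.
    return conn.execute("SELECT name FROM users WHERE name = ?", (name,)).fetchall()


# Classic payload that turns the WHERE clause into an always-true condition.
INJECTION = "nobody' OR '1'='1"


def test_injection_payload_returns_no_rows():
    conn = make_database()
    assert find_user_safe(conn, INJECTION) == []
    assert find_user_safe(conn, "alice") == [("alice",)]
```

With the unsafe version, the same payload matches every row in the table, which is exactly the kind of behavior a security base case should catch.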

Chaos Testing Environment

Chaos Engineering is a whole other topic to learn about. The main goal is to test our system against the worst possible scenario. For example, let’s say that Google authentication goes down and your application relies on it: what would happen? One of the most crucial scenarios is a web application with a microservice architecture.
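Real chaos experiments inject failures into running environments, but the idea can be sketched at a much smaller scale. In the Python example below, `FlakyAuthProvider` is a hypothetical stand-in for an external authentication provider with a switch to simulate an outage, and the test checks that `login` degrades gracefully instead of crashing.

```python
class AuthProviderDown(Exception):
    """Raised when the external authentication provider cannot be reached."""


class FlakyAuthProvider:
    """Hypothetical stand-in for an external provider with a failure switch."""

    def __init__(self):
        self.is_down = False

    def verify(self, token):
        if self.is_down:
            raise AuthProviderDown("provider unreachable")
        return token == "valid-token"


def login(provider, token):
    """Return a status string instead of crashing when the provider is down."""
    try:
        return "ok" if provider.verify(token) else "denied"
    except AuthProviderDown:
        return "try-again-later"


def test_login_degrades_gracefully_when_provider_is_down():
    provider = FlakyAuthProvider()
    assert login(provider, "valid-token") == "ok"
    provider.is_down = True  # inject the failure
    assert login(provider, "valid-token") == "try-again-later"
```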

Test Case Design techniques

We talked earlier in this blog post about designing our tests. For that, we can use a set of useful techniques that allow us to plan and think about our test cases.

Black-box techniques

- Boundary Value Analysis: Explores errors at the boundaries of the input domain. Catches inputs at the edges of the valid ranges that might break the system.

- Equivalence Partitioning: The input data is divided into classes whose members are expected to behave the same way, and a representative value from each class is tested, reducing the number of test cases.

- Decision Table Testing: The tests are based on decision tables, with one test for each combination of conditions and its expected outcome.

- State Transition Diagrams: These tests are based on State Machines with a finite number of states, where the transitions follow rules that depend on the input.

- Use Case Testing: The test cases are based on business scenarios and end user functionalities. These cover the entire system.
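The first two techniques are easy to show with a small example. The pricing rule below is invented for illustration (ages 0 to 12 pay 5, ages 13 to 64 pay 10, ages 65 to 120 pay 7); the test data shows how equivalence partitioning picks one value per class and boundary value analysis picks the values at the edges.

```python
def ticket_price(age: int) -> int:
    """Hypothetical pricing rule: 0-12 child, 13-64 adult, 65-120 senior."""
    if age < 0 or age > 120:
        raise ValueError("age out of range")
    if age <= 12:
        return 5
    if age <= 64:
        return 10
    return 7


# Equivalence partitioning: one representative (age, expected price) per class.
PARTITION_CASES = [(6, 5), (30, 10), (80, 7)]

# Boundary value analysis: values at the edges of each class.
BOUNDARY_CASES = [(0, 5), (12, 5), (13, 10), (64, 10), (65, 7), (120, 7)]

# Values just outside the valid input domain.
INVALID_AGES = [-1, 121]


def test_partitions_and_boundaries():
    for age, expected in PARTITION_CASES + BOUNDARY_CASES:
        assert ticket_price(age) == expected, age


def test_invalid_ages_are_rejected():
    for age in INVALID_AGES:
        try:
            ticket_price(age)
        except ValueError:
            continue
        raise AssertionError(f"age {age} should be rejected")
```

Eleven values cover the three classes and all of their edges, instead of trying every age from 0 to 120.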

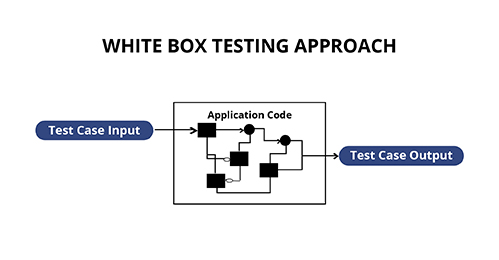

White-box techniques

- Statement Testing and Coverage: As the name suggests, this measures the coverage of the test cases, meaning the percentage of executable statements in the source code that are executed by tests. The acceptable percentage is given by the client or by the metric the business prefers.

- Decision Testing Coverage: This technique exercises every outcome of each decision, so that all the branches in the code are tested.

- Condition Testing: Every Boolean condition in the code is evaluated to both true and false. The resulting tests are meant to be fast.

- Multiple Condition Testing: Like condition testing, but every combination of outcomes of the conditions in a decision is tested. More scripts are required, hence more effort.

- All Path Testing: The source code of the program is used to find every executable path. This can help find faults in the code.
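To see how some of these criteria differ, consider this small Python sketch. The shipping rule is hypothetical; the test sets show that a single test can execute every statement, while decision coverage needs the false branch as well, and multiple condition testing needs all four combinations of the two inputs.

```python
def shipping_cost(total: float, is_member: bool) -> float:
    """Hypothetical rule: free shipping for members or orders of 50 or more."""
    cost = 10.0
    if total >= 50 or is_member:
        cost = 0.0
    return cost


# Each case is (total, is_member, expected_cost).

# Statement coverage: one test already executes every statement.
STATEMENT_CASES = [(60.0, False, 0.0)]

# Decision coverage: the `if` must evaluate to both True and False.
DECISION_CASES = [(60.0, False, 0.0), (20.0, False, 10.0)]

# Multiple condition testing: every combination of the two inputs.
MULTIPLE_CONDITION_CASES = [
    (60.0, True, 0.0),
    (60.0, False, 0.0),
    (20.0, True, 0.0),
    (20.0, False, 10.0),
]


def run_cases(cases):
    """Check every case and return how many were run."""
    for total, is_member, expected in cases:
        assert shipping_cost(total, is_member) == expected, (total, is_member)
    return len(cases)
```

Note that the statement coverage set never checks the paid shipping result, so a bug in the initial `cost = 10.0` value would go unnoticed with that set alone.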

Managing Bugs

Once our application is running, no matter how much testing we do, as the application gets bigger users will always find a way to break the program somehow, and part of our job is to fix it. The problem is, how do we find out about that bug?

One of the main ways is through bug reports, which are a detailed way to log defects present in the artifacts and notify developers about them. When we encounter a defect, the report needs to contain meaningful information that helps us solve the problem. Guru99 has a great blog post that lists the following fields:

- Defect ID: Unique identification number.

- Defect Description: Detailed description of the defect, including information about the module in which it was found.

- Version: Version of the application in which it was found.

- Steps: Detailed steps along with screenshots with which the developer can reproduce the defects.

- Date Raised: Date when the defect is raised.

- Reference: References to documents such as requirements, design, architecture or maybe even screenshots of the error to help understand the defect.

- Detected By: Name/ID of the tester/user who raised the defect.

- Status: Current status of the defect.

- Fixed By: Name/ID of the developer who fixed it.

- Date Closed: Date when the defect is closed.

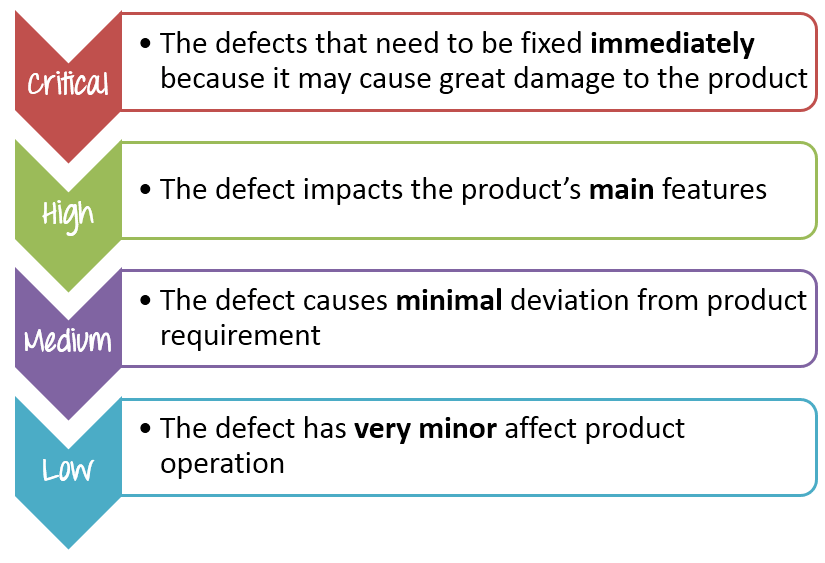

- Severity: Describes the impact of the defect on the application.

- Priority: Relates to the urgency of fixing the defect. Severity and priority can each be High/Medium/Low, based respectively on the impact of the defect and the urgency with which it should be fixed.

All this information can be filled in by either users or testers, depending on how the report is sent. Often a feature is built into the application to log this information, for example, a simple form in a web application.
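If we wanted to store these reports in our own code, the fields above map naturally to a small data structure. This is only a sketch: the class and attribute names are my own, and the `"New"` and `"Closed"` status values are assumptions, since the list does not define the possible statuses.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum
from typing import List, Optional


class Level(Enum):
    """Shared scale for severity and priority."""
    HIGH = "High"
    MEDIUM = "Medium"
    LOW = "Low"


@dataclass
class DefectReport:
    defect_id: int
    description: str
    version: str
    steps: List[str]
    date_raised: date
    detected_by: str
    severity: Level
    priority: Level
    status: str = "New"  # assumed initial status
    references: List[str] = field(default_factory=list)
    fixed_by: Optional[str] = None
    date_closed: Optional[date] = None

    def close(self, fixed_by: str, closed_on: date) -> None:
        """Record who fixed the defect and when it was closed."""
        self.fixed_by = fixed_by
        self.date_closed = closed_on
        self.status = "Closed"  # assumed final status
```

The fields that are only known after the fix (`fixed_by`, `date_closed`) default to `None`, so a report can be created as soon as the defect is found.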

Final Thoughts

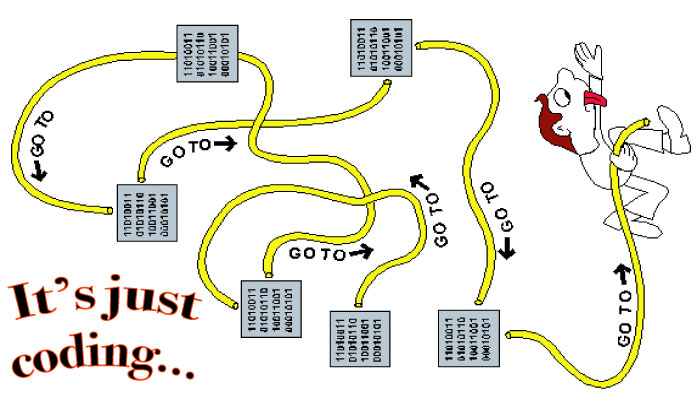

The world of software testing is huge, with a lot of places to focus on and a lot of different techniques that we can use. One of the main things I have learned during my professional experience is that testing takes more time than coding a feature, since there are a lot of factors that you need to take care of in large production environments.

One of the most important things I can recommend is to NOT write lots of tests for something that does not need them. Sometimes you might think that over-engineering your code is great, but it can backfire and overshadow bugs that can happen in production. The best advice: be smart about your tests.