--Originally published at TC2027 – Enro Blog

A Pollinator in Pink……..HFDF! flickr photo by The Manic Macrographer shared under a Creative Commons (BY) license

The problem of constructing a decision tree can be expressed recursively. First, select an attribute to place at the root node and make one branch for each possible value. This splits up the example set into subsets, one for every value of the attribute. Now the process can be repeated recursively for each branch, using only those instances that actually reach the branch. If at any time all instances at a node have the same classification, stop developing that part of the tree.

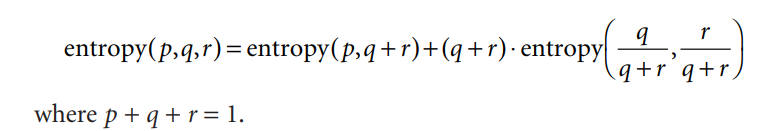

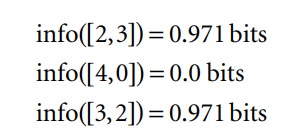

The only thing left to decide is how to determine which attribute to split on, given a set of examples with different classes. If we had a measure of the purity of each node, we could choose the attribute that produces the purest daughter nodes. The measure of purity that we will use is called the information and is measured in units called bits. Associated with a node of the tree, it represents the expected amount of information that would be needed to specify whether a new instance should be classified yes or no, given that the example reached that node.

For outlook We can calculate the average information value of these, taking into account the number of instances that go down each branch—five down the first and third and four down the second:

This average represents the amount of information that we expect would be necessary to specify the class of a new instance, given the tree structure in Figure 4.2(a) Before we created any of the nascent tree structures in Figure 4.2, the training examples at the root comprised line yes and five no nodes, corresponding to an information value of

Thus