--Originally published at Fernando Partida's Blog

This DevOps assignment is probably the most complicated of them all, as it is a culmination of everything done in the previous DevOps assignments. First of all, I used pytest as my unit testing tool, as it was the easiest to install and use in my virtual machine. Then, for all the GitHub work, I used my pytesting-Quality repository.

First I created a Python script that starts a local web server serving the index.html file located in my scripts' root folder. This file is constantly overwritten. The code for the server is the following:

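```python
import http.server
import socketserver

PORT = 8080

# SimpleHTTPRequestHandler serves files from the current working directory,
# so index.html must be in the directory the script is started from
Handler = http.server.SimpleHTTPRequestHandler

with socketserver.TCPServer(("", PORT), Handler) as httpd:
    # Block and answer requests until the process is killed
    httpd.serve_forever()
```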
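`SimpleHTTPRequestHandler` serves whatever directory the process was started in, and cron typically starts jobs in the crontab owner's home directory rather than in the scripts folder. A minimal sketch, assuming Python 3.7 or newer, that pins the served folder explicitly through the handler's `directory` argument; the `make_server` helper is a hypothetical name, not part of the original script:

```python
import functools
import http.server
import socketserver

PORT = 8080  # same port as the original server.py


def make_server(directory, port=PORT):
    """Build (but do not start) a TCPServer that serves files from `directory`."""
    # functools.partial pins the `directory` keyword of the handler (Python 3.7+)
    handler = functools.partial(http.server.SimpleHTTPRequestHandler,
                                directory=directory)
    # Binding happens here, so a busy port raises OSError immediately
    return socketserver.TCPServer(("", port), handler)
```

For example, `with make_server("/home/ferpart/Documents/Scripts") as httpd: httpd.serve_forever()` would serve that folder on port 8080 no matter which directory cron starts the process in.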

Then I created another Python script in charge of running the pytest_test.py file and checking whether pytest reports a "." (the test passed) or an "F" (the test failed). It then updates the index.html file with the status of the test, and after that updates the README.md file accordingly. Finally, the script adds and commits pytest_test.py and README.md (each with its own commit message) and pushes them. The following is the code for doing so:

```python
import subprocess

REPO = "~/PycharmProjects/pytest_test"

# Run the test file and keep only its progress line, e.g. "pytest_test.py .   [100%]"
c = subprocess.check_output(
    f'pytest {REPO}/pytest_test.py | grep "pytest_test.py "', shell=True)

# The second space-separated field is "." if the test passed and "F" if it failed
s = c.split(b' ')[1].decode("utf-8")
status = "passed" if s == "." else "failed"

# Publish the status on the local web page and in the README
subprocess.call(f'echo "pytest test {status}" > index.html', shell=True)
subprocess.call(f'echo "# pytest test {status}" > {REPO}/README.md', shell=True)

# Commit each file with its own message, then push
subprocess.call(f'git -C {REPO} add pytest_test.py', shell=True)
subprocess.call(f'git -C {REPO} commit -m "pytest_test updated"', shell=True)
subprocess.call(f'git -C {REPO} add README.md', shell=True)
subprocess.call(f'git -C {REPO} commit -m "README.md updated"', shell=True)
subprocess.call(f'git -C {REPO} push origin master', shell=True)
```
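The check `s == "."` only matches when pytest_test.py contains exactly one test: pytest prints one progress character per test, so two passing tests show up as `..` and would be reported as a failure. A minimal sketch of a more tolerant parser, assuming the same `grep`-ed progress line as input; the function name `status_from_progress_line` is hypothetical:

```python
def status_from_progress_line(line: bytes) -> str:
    """Turn a pytest progress line such as b'pytest_test.py ..F   [100%]'
    into "passed" or "failed"."""
    # The second whitespace-separated field holds one character per test
    marks = line.split()[1].decode("utf-8")
    # "F" marks a failed test and "E" an error; "." and "s" (skipped) are not failures
    return "failed" if ("F" in marks or "E" in marks) else "passed"
```

With this helper, the `status` line in the script above could become `status = status_from_progress_line(c)`.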

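Now, finally, for the pièce de résistance: the crontab was updated so that cron launches the server every minute and runs the tester on every PC reboot. While one copy of the server is running and holding port 8080, the extra copies launched each minute fail to bind the port and exit, so in practice the server stays up continuously. The following is the output of the `sudo crontab -l` command: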

```
# Edit this file to introduce tasks to be run by cron.
#
# Each task to run has to be defined through a single line
# indicating with different fields when the task will be run
# and what command to run for the task
#
# To define the time you can provide concrete values for
# minute (m), hour (h), day of month (dom), month (mon),
# and day of week (dow) or use '*' in these fields (for 'any').
#
# Notice that tasks will be started based on the cron's system
# daemon's notion of time and timezones.
#
# Output of the crontab jobs (including errors) is sent through
# email to the user the crontab file belongs to (unless redirected).
#
# For example, you can run a backup of all your user accounts
# at 5 a.m every week with:
# 0 5 * * 1 tar -zcf /var/backups/home.tgz /home/
#
# For more information see the manual pages of crontab(5) and cron(8)
#
# m h dom mon dow command
* * * * * /usr/bin/python3 /home/ferpart/Documents/Scripts/server.py
@reboot /usr/bin/python3 /home/ferpart/Documents/Scripts/gitPusher.py
```

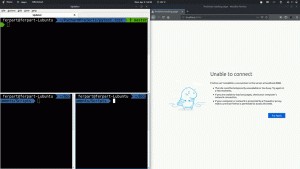
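Because the `* * * * *` entry starts a new copy of server.py every minute, one possible refinement is to have the script exit quietly when something is already listening on port 8080 instead of crashing with an "address already in use" error. A minimal sketch, assuming the server only needs to be reachable on localhost; `port_in_use` is a hypothetical helper, not part of the original scripts:

```python
import socket

PORT = 8080  # the port server.py listens on


def port_in_use(port, host="127.0.0.1"):
    """Return True if something is already accepting connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        # connect_ex returns 0 on success instead of raising an exception
        return s.connect_ex((host, port)) == 0
```

Calling `if port_in_use(PORT): sys.exit(0)` at the top of server.py would turn the every-minute relaunches into quiet no-ops. The check is only a courtesy: if two copies start at the same instant, the loser still fails at bind time, as it does now.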

The following GIF shows everything working. (It obviously isn't using crontab at the moment, as cron wouldn't update the page that quickly.)