--Originally published at Hackerman's house

Lisp: Good News, Bad News, How to Win Big is an essay written by the American computer scientist Richard P. Gabriel in 1991. At the time, he worked extensively with the Lisp programming language. The essay discusses the language's advantages and disadvantages, how well programmers had accepted it, and some of its uses.

Lisp’s successes

Standardization

One of Lisp’s biggest successes is that there is a standard Lisp, called Common Lisp. It wasn’t considered the best Lisp, but it was the one ready to be standardized. The Common Lisp committee was formed by users of different Lisps across the United States, and Common Lisp was a coalescence of the Lisps these people cared about.

Performance

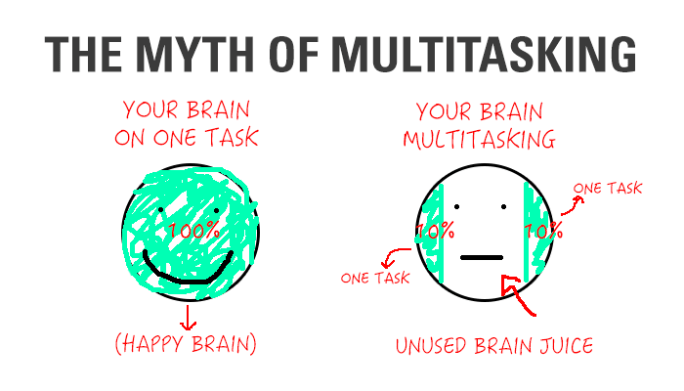

Lisp implementations of the time used modern compiler technology, so they performed very well compared to older Lisps. They also offered multitasking and non-intrusive garbage collection, two valuable features that had not been possible before.

Good environments

The environments offered many good qualities, such as windowing, fancy editing, and good debugging. Almost every Lisp system had this kind of environment, and a lot of attention had been paid to the software lifecycle.

Good integration

Lisp code could coexist with C, Pascal, Fortran, and other languages: Lisp could invoke them and be invoked by them. This was useful for manipulating external data and foreign code.

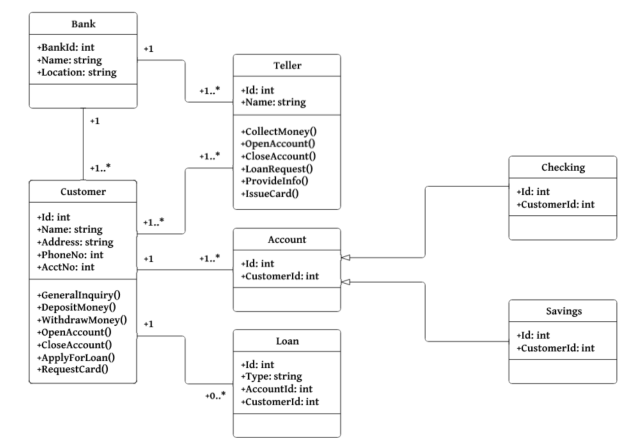

Object-oriented programming

At the time, Lisp had the most powerful and comprehensive object-oriented extensions of any language. This was really important because object-oriented programming was the new trend.

Lisp’s failures

The rise of worse is better

One of the biggest problems of Lisp was the tension between two opposing philosophies: the right thing and worse is better. Lisp designers used the right thing, while Unix and C